Disclaimer: This post has been translated to English using a machine translation model. Please, let me know if you find any mistakes.

Caffeine is a program that prevents our Linux machine from locking the screen and then going to sleep. To keep it awake, we give it caffeine.

Installation

The installation is very simple; we just have to run

sudo apt update

sudo apt install caffeine

Infinite Caffeine

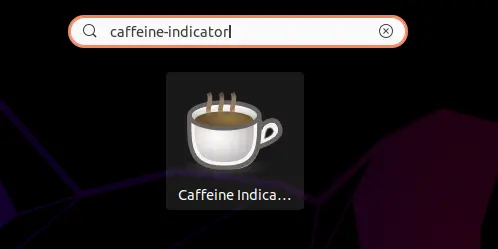

If we press the Windows key on the keyboard and type caffeine-indicator, the option to launch the indicator will appear.

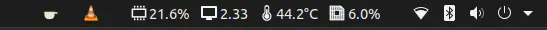

If we run it, an indicator icon will appear in the top bar of Ubuntu.

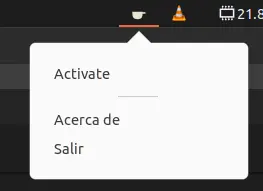

If we click on the indicator, a menu will appear.

Now we can click on Activate so that our Ubuntu will have infinite caffeine and never go to sleep.

Caffeine for a Command

One thing that happens to me is that when I train a neural network, I like to keep the model evaluation in view, even when I'm not at the computer. But if the training takes hours, at some point the screensaver kicks in and I can no longer see the training progress. To fix this, we have caffeinate. Running

`caffeinate COMMAND`

keeps our computer caffeinated, so it does not fall asleep while COMMAND is running.
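As a small sketch of how this could look for a long training run, the wrapper below uses caffeinate when it is installed and otherwise just runs the command as is. The function name `run_awake` and the script `train.py` are made up for this example; they are not part of the caffeine package.

```shell
#!/usr/bin/env bash

# run_awake: hypothetical helper (not shipped with caffeine).
# Runs the given command under caffeinate if it is available,
# otherwise falls back to running the command directly.
run_awake() {
    if command -v caffeinate >/dev/null 2>&1; then
        # Keep the machine awake for as long as the command runs
        caffeinate "$@"
    else
        echo "caffeinate not found, running without it" >&2
        "$@"
    fi
}

# Example (train.py is a placeholder for your own training script):
# run_awake python train.py
```

This way the same line works on a machine where caffeine is not installed, only without the protection against the screen going to sleep.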